With HCX R145, the HCX licensing included with VMC on AWS was expanded with several HCX Enterprise features. One of these is Mobility Optimized Networking (MON).

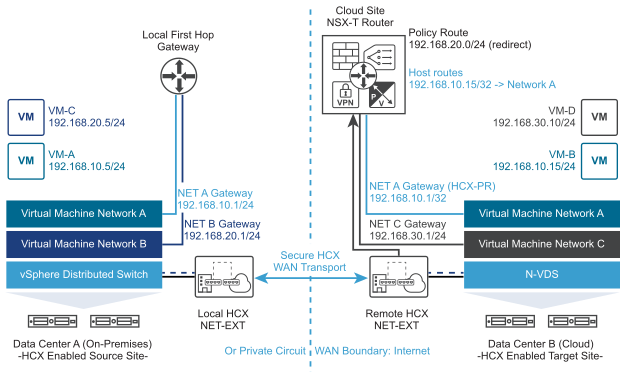

When you stretch a network from on-prem into VMC with HCX Advanced, the gateway IP remains on-prem. This means that routed traffic from VMs in VMC must cross the WAN link to reach their gateway, which is not an optimal configuration.

MON enables a virtual gateway on the VMC side, so a migrated VM can use a gateway that is local to VMC on AWS. This prevents the so-called ‘tromboning’ or ‘hairpinning’ of traffic and keeps routed VM-to-VM traffic inside VMC on AWS.
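To make the tromboning argument concrete, here is a minimal Python sketch that counts how many one-way WAN crossings a packet from a VM in VMC makes, depending on where its gateway lives. The addresses are the ones used later in this post; the `HOST_SITE` map, the `wan_crossings` function, and the simplifications (request direction only, one site per host) are illustrative assumptions, not how HCX or NSX actually compute paths.

```python
import ipaddress

# Illustrative model only: which site each host sits in after the migration.
HOST_SITE = {
    "192.168.70.84": "VMC",     # Windows VM (stretched segment)
    "192.168.70.161": "VMC",    # Linux VM (stretched segment)
    "172.20.0.129": "on-prem",  # on-prem DNS server
    "10.47.159.11": "VMC",      # host on a VMC-native segment
}
GATEWAY_IP = "192.168.70.1"
STRETCHED_SEGMENT = ipaddress.ip_network("192.168.70.0/24")


def wan_crossings(src: str, dst: str, gateway_site: str) -> int:
    """Count one-way WAN crossings for a packet from src to dst (request direction only)."""
    src_site = HOST_SITE[src]
    if dst == GATEWAY_IP:
        # Pinging the gateway itself: only the gateway's location matters.
        return 0 if src_site == gateway_site else 1
    dst_site = HOST_SITE[dst]
    if ipaddress.ip_address(dst) in STRETCHED_SEGMENT:
        # Same L2 segment: no gateway involved; cross only if the peer is at the other site.
        return 0 if src_site == dst_site else 1
    # Routed traffic: source -> gateway, then gateway -> destination.
    return int(src_site != gateway_site) + int(gateway_site != dst_site)


# Linux VM reaching a VMC address while its gateway is still on-prem: tromboning.
print(wan_crossings("192.168.70.161", "10.47.159.11", gateway_site="on-prem"))  # 2
# Same traffic after MON moves the gateway into VMC.
print(wan_crossings("192.168.70.161", "10.47.159.11", gateway_site="VMC"))  # 0
```

The model deliberately ignores return traffic; it only shows why moving the gateway to the same site as the VM removes the WAN hops for routed traffic while leaving L2 traffic unchanged.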

To enable MON, you must first upgrade HCX to R145. The first part of my previous post on HCX RAV walks through the upgrade.

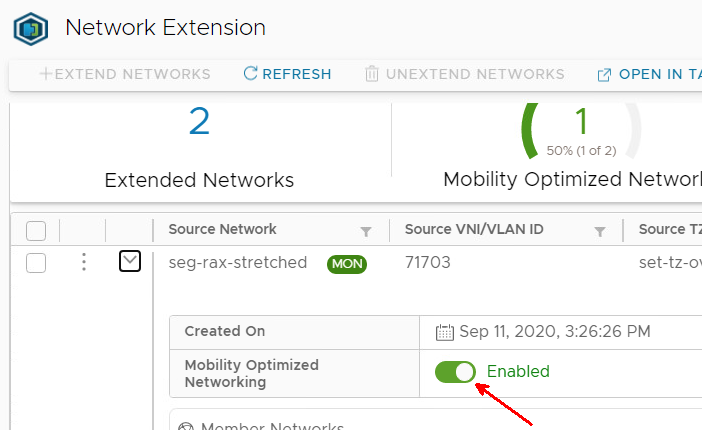

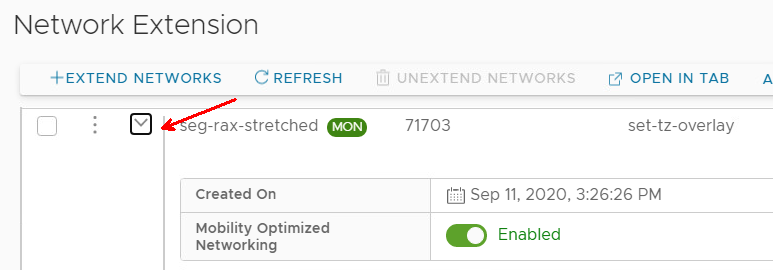

Once the upgrade is complete, enabling MON is as simple as flipping this switch to enabled.

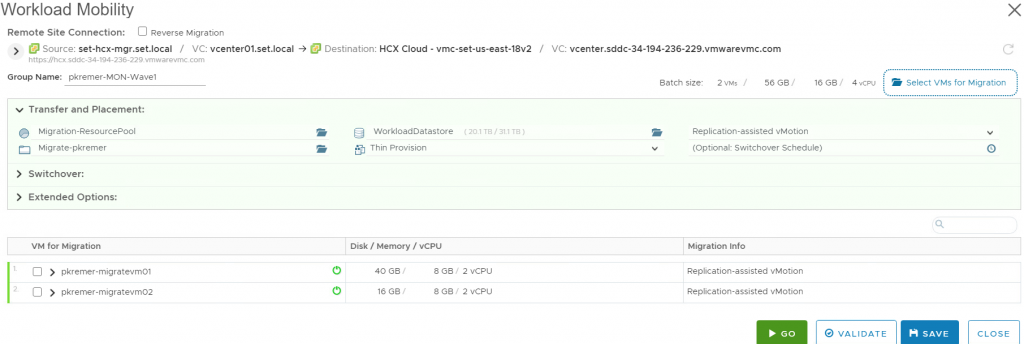

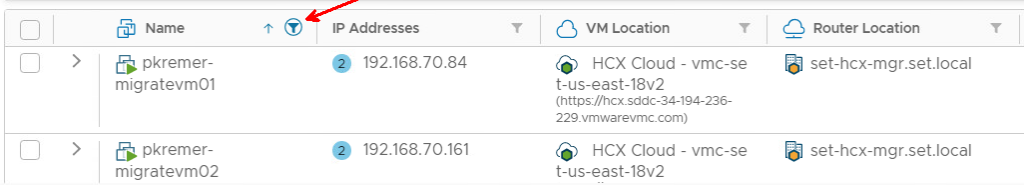

I have two VMs, one Windows and one Linux, that we are going to migrate. MON requires VMware Tools, so make sure Tools is installed on each VM.

- Windows VM: 192.168.70.84
- Linux VM: 192.168.70.161
- Gateway IP address: 192.168.70.1
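Because MON depends on VMware Tools, and an outdated Tools install is the likely culprit in the Linux ARP issue described later, it can help to check the installed version before migrating. This is a sketch that assumes a Linux guest with `vmware-toolbox-cmd` on the PATH (it ships with VMware Tools and open-vm-tools) and assumes its `-v` output starts with a dotted version such as `11.3.5.31214`. The helper names are hypothetical, and this post does not state a minimum Tools version for MON.

```python
import re
import shutil
import subprocess
from typing import Optional, Tuple


def parse_tools_version(text: str) -> Optional[Tuple[int, ...]]:
    """Extract the first dotted version number, e.g. '11.3.5.31214', as a tuple of ints."""
    match = re.search(r"\d+(?:\.\d+)+", text)
    if match is None:
        return None
    return tuple(int(part) for part in match.group(0).split("."))


def installed_tools_version() -> Optional[Tuple[int, ...]]:
    """Return the VMware Tools version on a Linux guest, or None if the CLI is not found."""
    exe = shutil.which("vmware-toolbox-cmd")
    if exe is None:
        return None  # VMware Tools / open-vm-tools missing, or not on PATH
    result = subprocess.run([exe, "-v"], capture_output=True, text=True, check=False)
    return parse_tools_version(result.stdout)


if __name__ == "__main__":
    version = installed_tools_version()
    print("VMware Tools not found" if version is None else f"VMware Tools {version}")
```

Returning a tuple makes version comparisons straightforward, for example `installed_tools_version() >= (11, 0)`, once you know which minimum you want to enforce.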

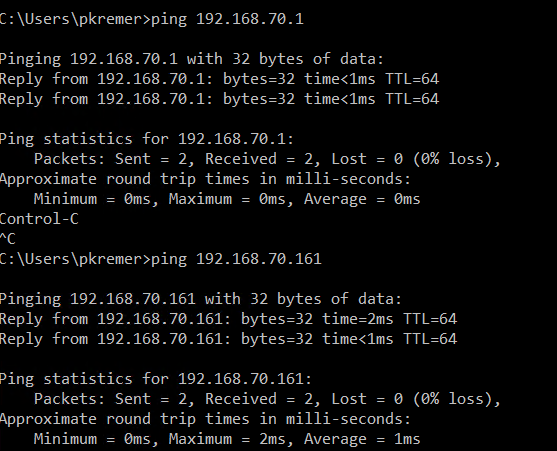

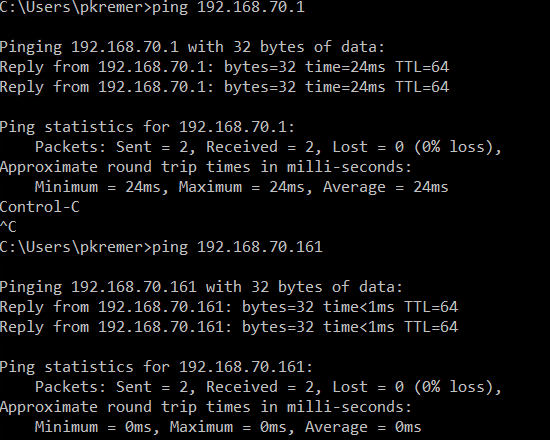

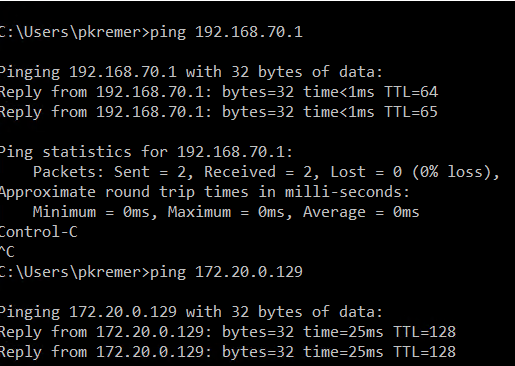

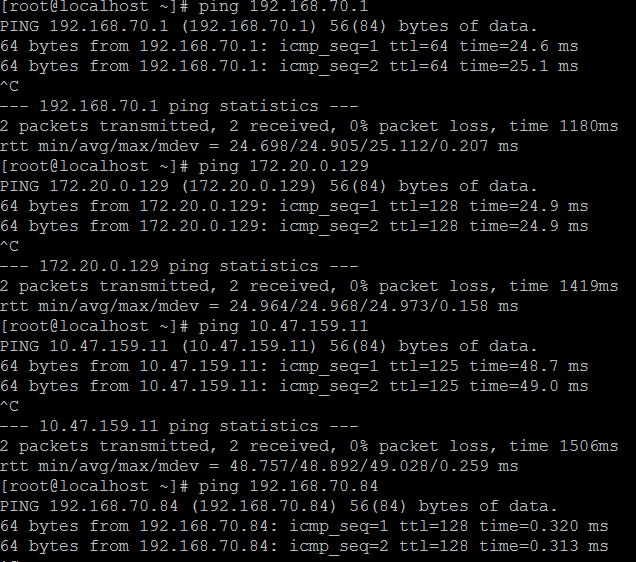

From an RDP session on the Windows machine, I ping the gateway IP and the Linux VM's IP. The low latency shows that the traffic stays on-prem.

I also ping the on-prem DNS server and get <1ms latency.

I start a RAV migration for both VMs; you can find full details on enabling RAV migrations in my previous post. RAV is not required: you can use a bulk migration or vMotion instead if you prefer.

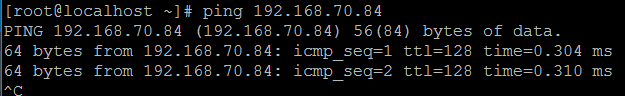

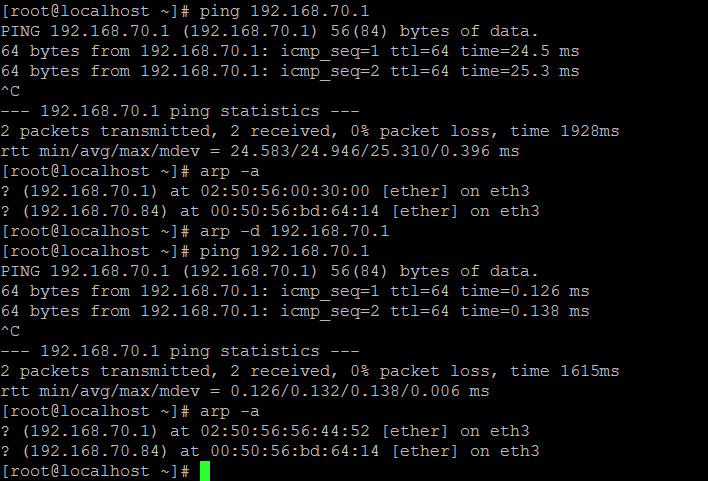

Now both of my VMs are in VMware Cloud on AWS. From the Windows VM, a ping to the gateway takes 24ms because the traffic crosses the WAN to reach the on-prem gateway. A ping to the Linux VM returns in <1ms, because both VMs are on the same stretched segment in VMC and the traffic stays at L2.

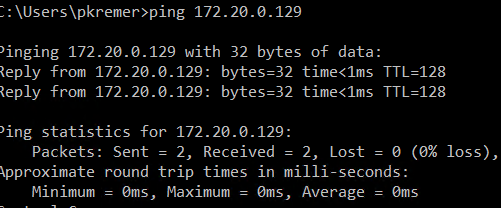

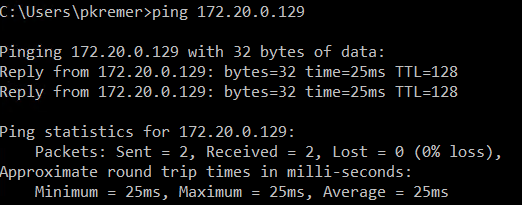

I ping the on-prem DNS server and get 25ms latency.
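These before-and-after checks boil down to reading RTTs out of `ping` output. A minimal sketch, assuming the standard Windows (`time=24ms`, `time<1ms`) and Linux (`time=24.3 ms`) reply formats; the sample lines mimic Windows output using latencies from this post, and the 5 ms local-versus-WAN cut-off is an arbitrary assumption for this lab, not an HCX value.

```python
import re
from statistics import median
from typing import List

# Matches "time=24ms", "time<1ms" (Windows) and "time=24.3 ms" (Linux).
RTT_PATTERN = re.compile(r"time[=<]\s*([\d.]+)\s*ms")
WAN_THRESHOLD_MS = 5.0  # assumption: anything slower is treated as crossing the WAN


def parse_rtts(ping_output: str) -> List[float]:
    """Return per-reply RTTs in ms; 'time<1ms' is recorded as its 1 ms upper bound."""
    return [float(value) for value in RTT_PATTERN.findall(ping_output)]


def classify(ping_output: str) -> str:
    """Label a ping run as 'local', 'across WAN', or 'no replies' using the median RTT."""
    rtts = parse_rtts(ping_output)
    if not rtts:
        return "no replies"
    return "across WAN" if median(rtts) > WAN_THRESHOLD_MS else "local"


before = "Reply from 192.168.70.1: bytes=32 time=24ms TTL=64\nReply from 192.168.70.1: bytes=32 time=25ms TTL=64"
after = "Reply from 192.168.70.1: bytes=32 time<1ms TTL=64"
print(classify(before), "->", classify(after))  # across WAN -> local
```

Using the median rather than a single reply keeps one slow or fast outlier from flipping the label.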

Now I will migrate the gateway. Find the extended network in on-prem HCX and click the down caret to expand its configuration.

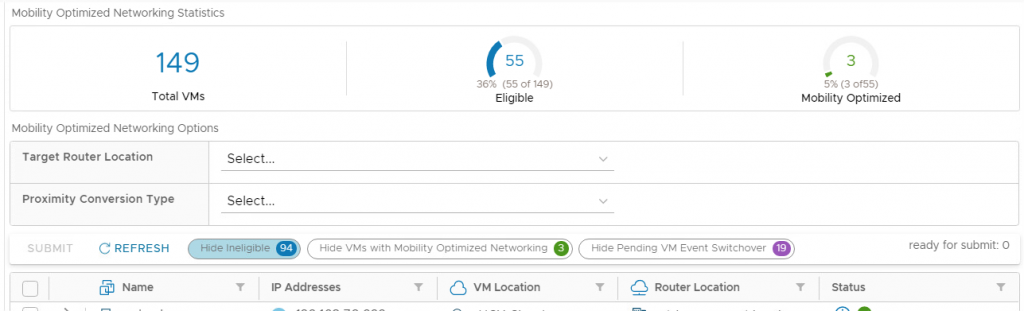

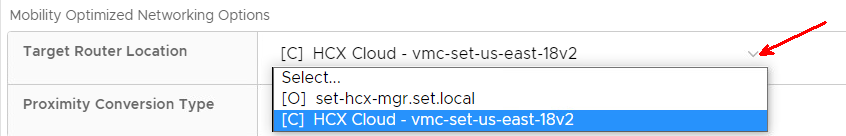

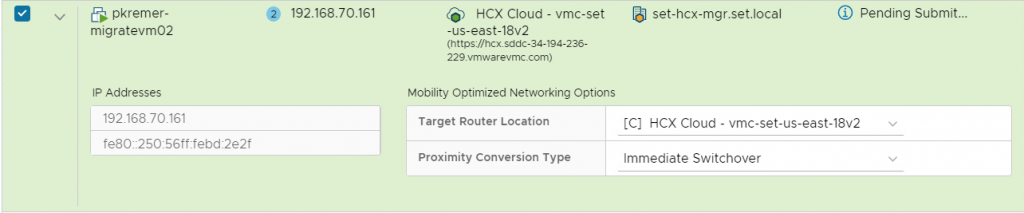

I scroll down to the Mobility Optimized Networking options.

I use the name filter to find my two VMs. Both show as migrated to VMC, but their router is still on-prem.

I start with the Windows VM and change its gateway to the cloud gateway in VMC.

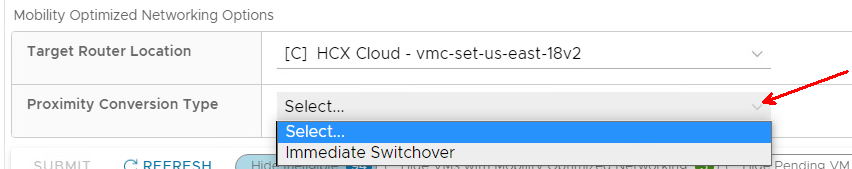

I want to make the change as soon as I hit submit.

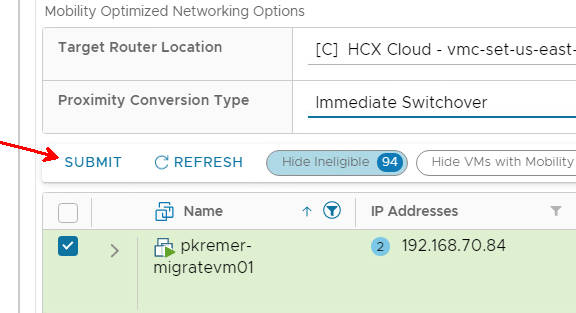

I hit submit.

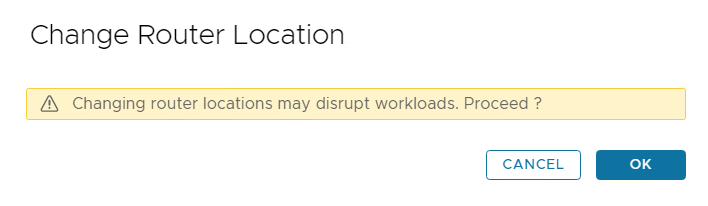

I get a warning that changing the gateway is disruptive.

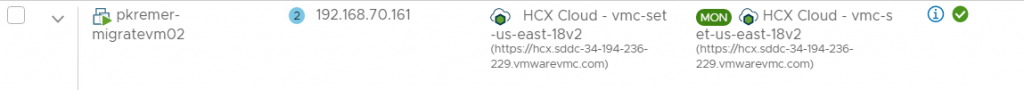

My gateway is now in VMC.

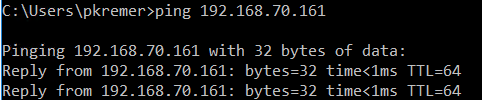

I ping the gateway IP and get <1ms latency: no trip across the WAN! I ping the on-prem DNS server and get the expected 25ms latency, since that traffic still has to cross the WAN.

I ping the Linux server. Even though the two VMs now use different gateways, this does not affect their L2 communication, which stays in VMC with <1ms latency.

Next I ping another DNS server in VMC and get <1ms latency, proof that we can do inter-VLAN routing without tromboning.

Now I switch to the Linux VM – the gateway for this VM is still on-prem.

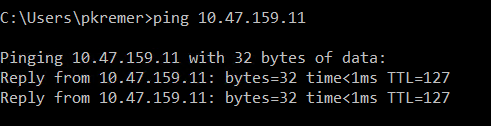

The gateway 192.168.70.1 responds in 25ms, which is expected because it is across the WAN. The on-prem DNS server 172.20.0.129 is also at 25ms. The ping to 10.47.159.11, an IP address in VMC, takes 49ms. This is the tromboning effect: the traffic crosses the WAN once to reach the on-prem gateway, then crosses back to VMC to reach the destination segment.

A ping to the Windows VM stays in VMC as L2 communication.
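The 49ms figure is roughly what the other measurements predict. Assuming the tromboned ping makes two full WAN round trips (the request goes VMC to the on-prem gateway and back to VMC, and the reply takes the same detour because the route back to 192.168.70.0/24 also runs through on-prem), the estimate is twice the single-round-trip RTT. This sketch averages the single-WAN-round-trip pings reported in this post and doubles them; the two-round-trip return path is an assumption, not something the post measures directly.

```python
from typing import Sequence

# Single-WAN-round-trip pings reported in this post (ms): gateway and on-prem DNS.
SINGLE_ROUND_TRIP_MS = [24.0, 25.0, 25.0, 25.0]


def trombone_rtt_estimate(single_trip_rtts_ms: Sequence[float], wan_round_trips: int = 2) -> float:
    """Estimate ping RTT as (number of WAN round trips) x (mean single-round-trip RTT).

    Assumes a tromboned ping makes two WAN round trips: the request travels
    VMC -> on-prem gateway -> VMC, and the reply travels VMC -> on-prem -> VMC.
    LAN and processing delays inside each site are ignored.
    """
    mean_rtt = sum(single_trip_rtts_ms) / len(single_trip_rtts_ms)
    return wan_round_trips * mean_rtt


print(trombone_rtt_estimate(SINGLE_ROUND_TRIP_MS))  # 49.5, close to the observed 49ms
```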

I migrate the Linux gateway to VMC.

HCX shows that the gateway has moved.

I ping the gateway, and the latency shows that the traffic is still going back on-prem. The ARP cache still holds the entry for the on-prem gateway, so I clear the ARP entry for gateway IP 192.168.70.1 and ping again. Now I get <1ms latency and a new ARP entry.
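The stale-ARP check can be scripted on the Linux VM. A minimal sketch, assuming iproute2's `ip neigh show` output format (`<ip> dev <iface> lladdr <mac> <state>`) and that you recorded the on-prem router's MAC address before switching the gateway; the interface name `eth0`, the MAC addresses, and the helper names are hypothetical. The code only builds the `ip neigh del` command and does not run it.

```python
from typing import Dict, List, Optional


def parse_ip_neigh(output: str) -> Dict[str, str]:
    """Map IP -> MAC from `ip neigh show` output; entries without an lladdr are skipped."""
    table: Dict[str, str] = {}
    for line in output.splitlines():
        fields = line.split()
        if "lladdr" in fields:
            idx = fields.index("lladdr")
            if idx + 1 < len(fields):
                table[fields[0]] = fields[idx + 1].lower()
    return table


def stale_gateway_fix(output: str, gateway_ip: str, onprem_mac: str, dev: str) -> Optional[List[str]]:
    """Return an `ip neigh del` command if the gateway still resolves to the on-prem MAC."""
    cached = parse_ip_neigh(output).get(gateway_ip)
    if cached != onprem_mac.lower():
        return None  # no entry, or already pointing at a different (new) gateway MAC
    return ["ip", "neigh", "del", gateway_ip, "dev", dev]


# Hypothetical sample output; the MAC addresses and eth0 are made up for illustration.
sample = (
    "192.168.70.1 dev eth0 lladdr 00:50:56:aa:bb:01 STALE\n"
    "192.168.70.84 dev eth0 lladdr 00:50:56:aa:bb:02 REACHABLE\n"
)
print(stale_gateway_fix(sample, "192.168.70.1", "00:50:56:AA:BB:01", "eth0"))
```

To apply it, pass in real `ip neigh show` output and run the returned command with `subprocess.run(cmd, check=True)` as root; the next ping then triggers a fresh ARP resolution.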

The likely culprit for this Linux problem is an old VMware Tools installation, but that is a topic to explore in another post.

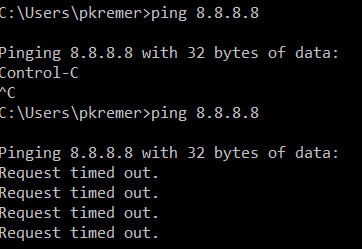

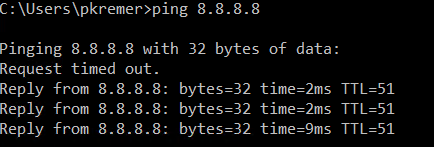

We are almost done, but one last problem remains: my Windows VM shows the no internet access icon in the system tray. I try pinging, and sure enough, there is no internet access.

Why is this? Before the gateway switch, traffic always egressed from on-prem. Now it egresses from VMC, where the Compute Gateway Firewall blocks it. After a quick firewall change that allows my stretched segment to egress to the internet, the ping succeeds.
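The post does not show the firewall change in detail. As a sketch, the equivalent Compute Gateway rule can be expressed as an NSX Policy API request body. The rule path, the `cgw-public` scope label for the Internet interface, and the group path for the stretched segment are assumptions to verify against your SDDC's API reference; the code only builds the JSON and does not call any API.

```python
import json
from typing import Dict


def internet_egress_rule(group_path: str, rule_id: str = "allow-stretched-egress") -> Dict[str, object]:
    """Build a CGW rule body allowing `group_path` out through the Internet interface."""
    return {
        "id": rule_id,
        "display_name": "Allow stretched segment to internet",
        "source_groups": [group_path],  # hypothetical group holding 192.168.70.0/24
        "destination_groups": ["ANY"],
        "services": ["ANY"],  # consider narrowing to the services you actually need
        "action": "ALLOW",
        "scope": ["/infra/labels/cgw-public"],  # assumption: label for the Internet interface
    }


def rule_url(nsx_base_url: str, rule_id: str) -> str:
    """Assumed VMC Compute Gateway rule path; verify against your SDDC's API docs."""
    return f"{nsx_base_url}/policy/api/v1/infra/domains/cgw/gateway-policies/default/rules/{rule_id}"


if __name__ == "__main__":
    body = internet_egress_rule("/infra/domains/cgw/groups/stretched-192-168-70")
    # The body would be sent with an HTTP PUT to rule_url(...); no request is made here.
    print(json.dumps(body, indent=2))
```

Allowing `ANY` service mirrors the quick fix described above; in production you would typically restrict the services and destinations.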

Enjoy the new MON feature in VMC at no additional charge!
