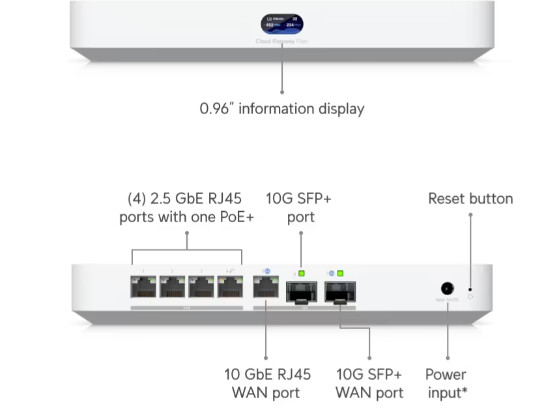

I recently got T-Mobile 2 Gbps fiber installed at home, and needed a Ubiquiti hardware upgrade to accommodate the new speeds. […]

Tag: upgrade

Homelab – 2012 to 2019 AD upgrade

I foolishly thought that I would quickly swap out my 2012 domain controllers with 2019 domain controllers, thus beginning a […]

New VMUG presentation posted

I posted today’s VMUG presentation in the VMUG section.

Android 4.1.2 – Unable to use GPS

I just got the Android 4.1.2 push to my Verizon Droid Bionic and suddenly my map application stopped working. When […]

vCenter 4.1 upgrade problem

I was upgrading vCenter from 4.0 U2 to 4.1 and installing it on a clean Windows 2008 64-bit server. The […]

Alarm problem after vCenter 4.1 upgrade

UPDATE 12/23/2010: VMware support confirms that there is a bug related to the vCenter 4.1 upgrade; it appears to be […]