In this AWS re:Post article, I provide details on configuring granular permissions to limit the scope of virtual machine inventory […]

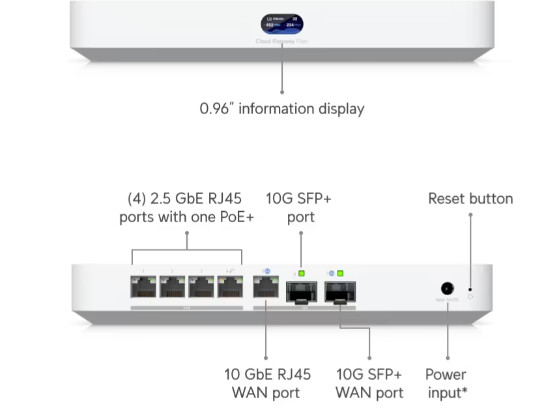

Upgrading from Ubiquiti USG-Pro to UCG-Fiber

I recently got T-Mobile 2 Gbps fiber installed at home and needed a Ubiquiti hardware upgrade to accommodate the new speeds. […]

Detecting tornadoes with RadarScope

I rarely blog about anything outside of IT topics, but today I’m going to write about tornadoes. After the EF-3 […]

New Capabilities in AWS Transform for VMware

AWS Transform is generally available today! In this AWS blog post, I explore the features included in this release.

Understanding the direction setting for VMware NSX firewall rules

In this AWS re:Post article, I describe the purpose behind IN, OUT, and IN_OUT rules, with examples.

Using Amazon Athena to read data captured by the AWS Application Discovery Agent

In this AWS re:Post article, I demonstrate enabling Athena export from Migration Hub, and retrieving data from the Discovery Agent […]

Configuring AWS Directory Service as an identity source for Amazon Q Developer transformation

In this AWS re:Post article, I walk you through configuring AWS Managed Microsoft AD, then using that as a federated […]

Exporting network configuration data with Import/Export for NSX

In this blog post, I talk about Import/Export for NSX, a new AWS Open Source tool that you can use […]

Getting started with Amazon Q Developer transformation capabilities for VMware

In this blog post, we explore how to get started with Amazon Q Developer transformation capabilities for VMware.

RVTools 4.7.1 bug with VMware Cloud on AWS

In this re:Post article, I show workarounds for a bug with RVTools 4.7.1 and VMware Cloud on AWS.