Introduction

I became aware of the VMware Event Broker Appliance (VEBA) Fling in December 2019. The VEBA Fling is open source code released by VMware which allows customers to easily create event-driven automation based on vCenter Server Events. You can think of it as a way to run scripts based on alarm events, but you’re not limited to only the alarm events exposed in the vCenter GUI. Instead, you have the ability to respond to ANY event in vCenter.

Did you know that an event fires when a user account is created? Or when an alarm is created or reconfigured? How about when a distributed virtual switch gets upgraded or when DRS migrates a VM? There are more than 1,000 events that fire in vCenter; you can trap the events and execute code in response using VEBA. Want to send an email and directly notify PagerDuty via API call for an event? It’s possible with VEBA. VEBA frees you from the constraints of the alarms GUI, both in terms of events that you can monitor as well as actions you can take.

VEBA is a client of the vSphere API, just like PowerCLI. VEBA connects to vCenter to listen for events. There is no configuration stored in the vCenter itself, and you can (and should!) use a read-only account to connect VEBA to your vCenter. It’s possible for more than one VEBA to listen to the same single vCenter the same way multiple users can interact via PowerCLI with the same vCenter.

For more details, make sure to check out the VMworld session replay “If This Then That” for vSphere - The Power of Event-Driven Automation (CODE1379UR).

Installing VEBA – Updated on March 3, 2020 to show the 0.3 release

If you notice screenshots alternating between an appliance named veba01 and veba02, it’s because I alternate between the two as I work with multiple VEBA versions.

The instructions can be found on the VEBA Getting Started page.

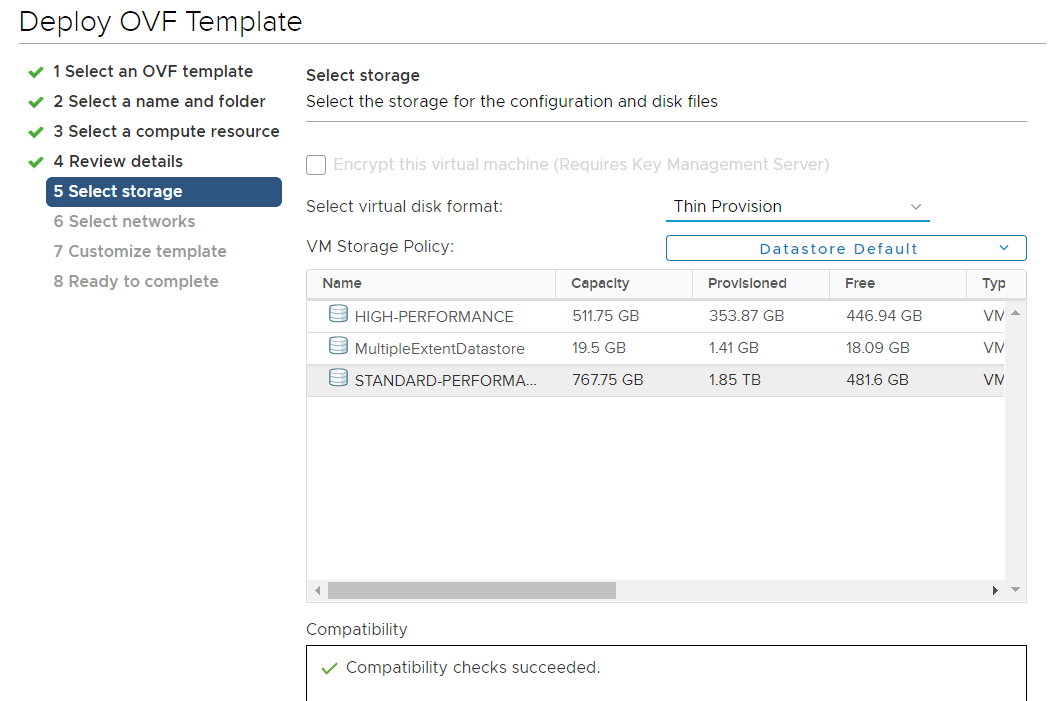

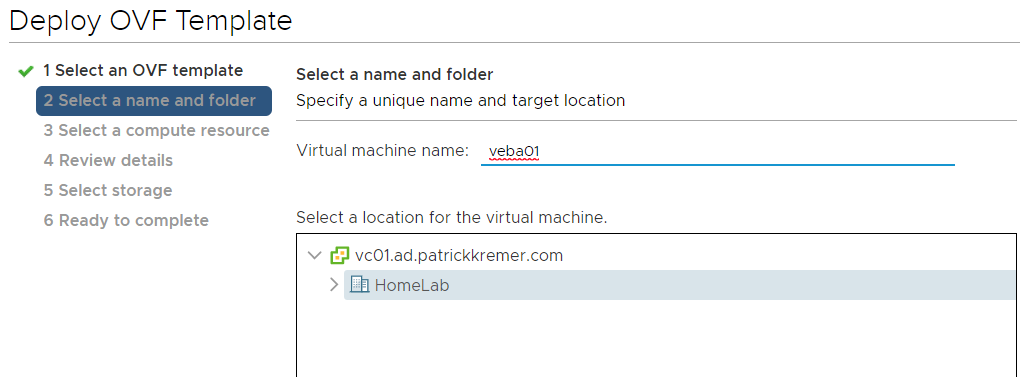

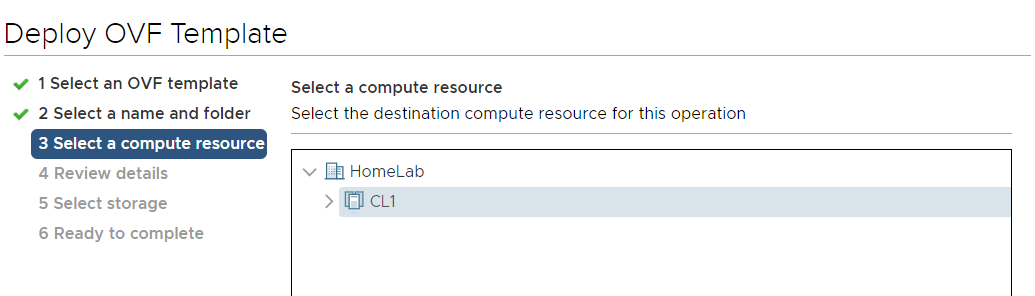

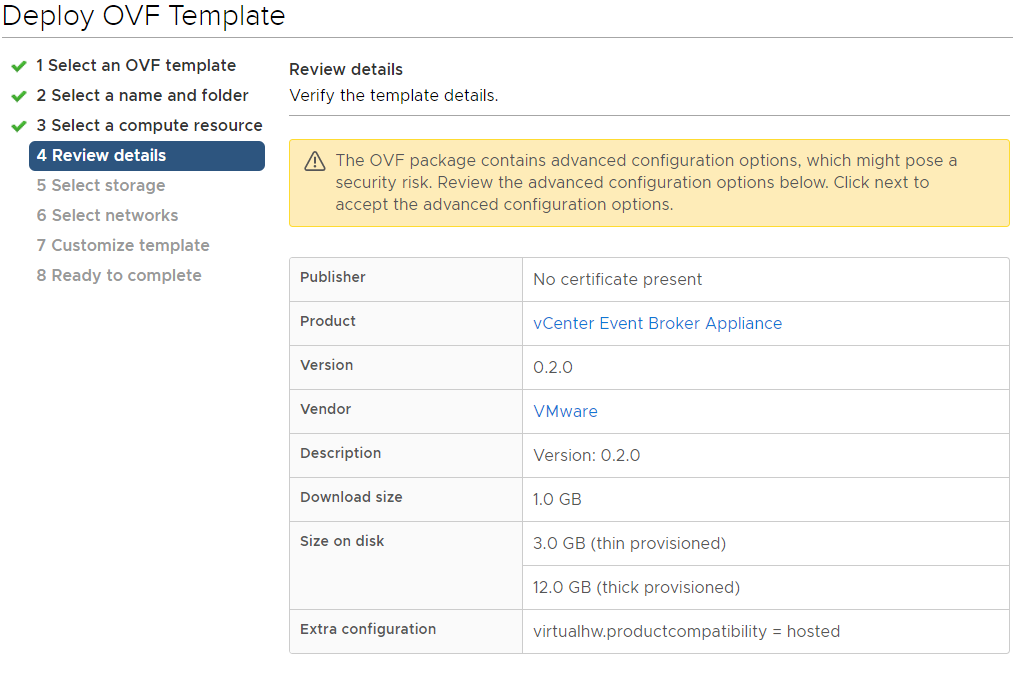

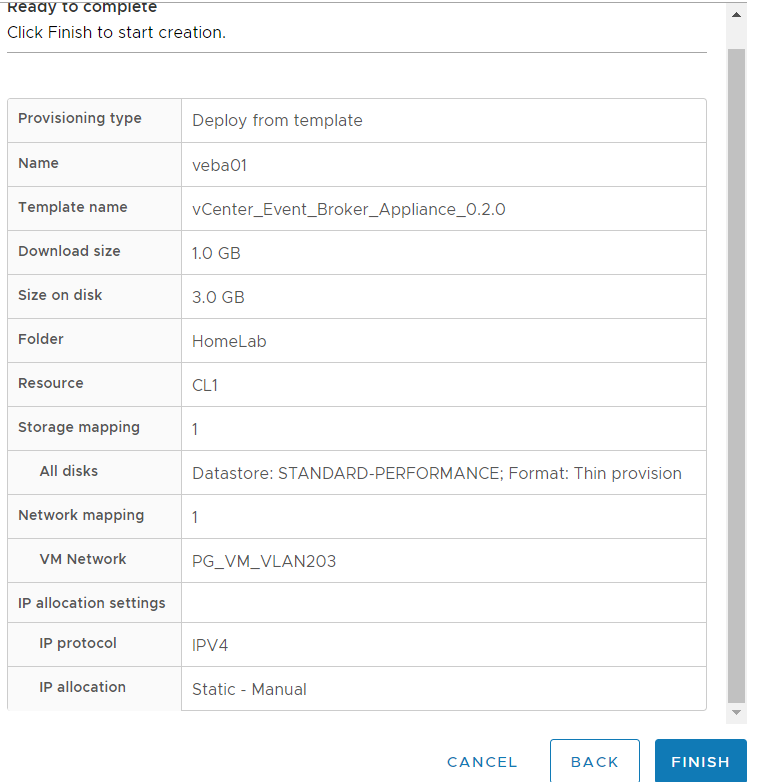

First, we download the OVF appliance from https://flings.vmware.com/vcenter-event-broker-appliance and deploy it to our cluster.

VMC on AWS tip: As with all workload VMs, you can only deploy on the WorkloadDatastore.

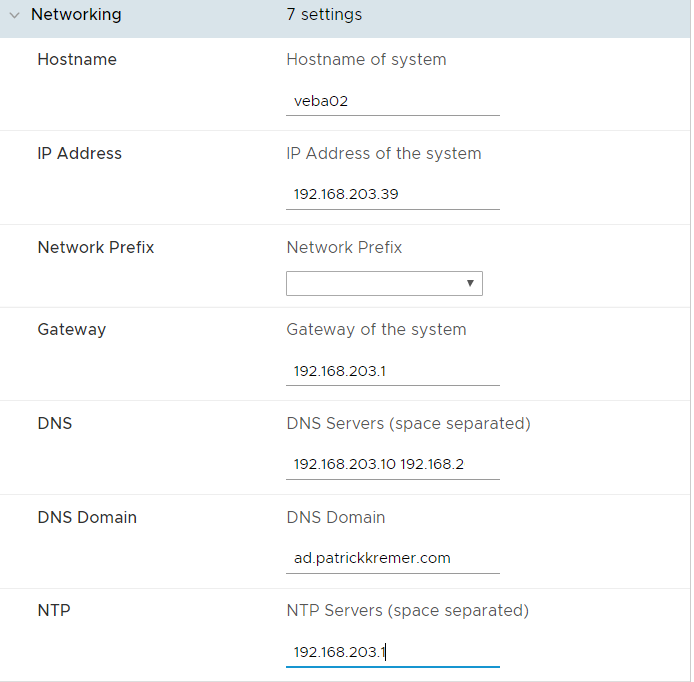

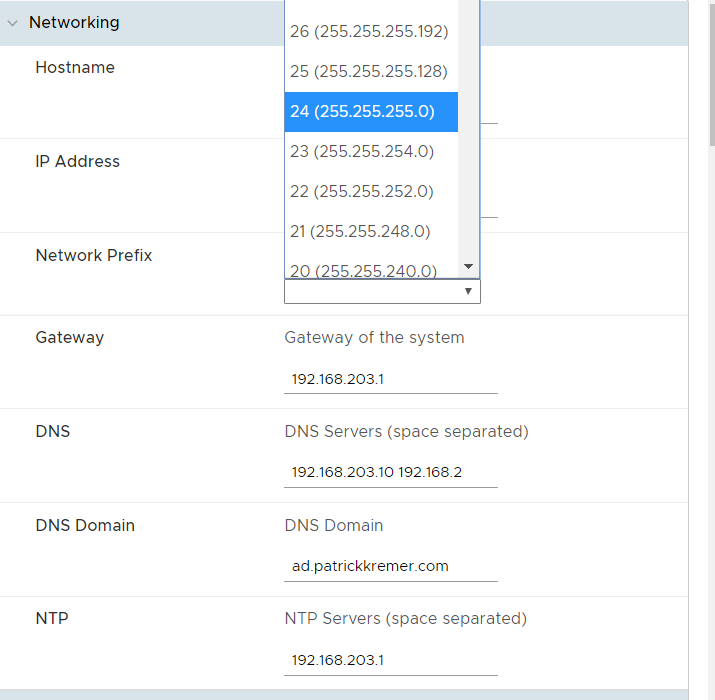

I learned from my first failed 0.1 VEBA deployment to read the instructions: they say the netmask should be in CIDR format, but I put in 255.255.255.0. In 0.3, the subnet mask/network prefix is a dropdown, eliminating this problem.
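If you are deploying a release without the dropdown, the conversion from a dotted netmask to a CIDR prefix is easy to check ahead of time. This is only a sketch using Python's standard `ipaddress` module; the function name `netmask_to_prefix` is my own and is not part of VEBA.

```python
import ipaddress


def netmask_to_prefix(netmask):
    """Return the CIDR prefix length for a dotted-decimal netmask.

    Raises ValueError if the string is not a valid netmask.
    """
    # IPv4Network accepts "address/netmask" and validates the mask for us.
    return ipaddress.IPv4Network("0.0.0.0/" + netmask).prefixlen


# The value I mistakenly typed into the 0.1 deployment wizard.
print(netmask_to_prefix("255.255.255.0"))  # prints 24
```

So 255.255.255.0 should have been entered as 24.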

Pay attention to the DNS and NTP settings. Multiple entries are space separated in the OVF. The instructions have said ‘space separated’ since 0.2, but it’s still easy to get wrong, so enter them carefully here. NTP settings were new in 0.2.
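A quick way to sanity-check what you are about to paste into the DNS or NTP field is to split it the way a space-separated list is expected to be split. This is a sketch only: the helper name and the sample addresses are made up for illustration, and it is not how the appliance itself parses the field.

```python
def parse_server_list(value):
    """Split a space-separated OVF field into entries, rejecting commas."""
    if "," in value:
        raise ValueError("use spaces, not commas: %r" % value)
    # str.split() with no arguments splits on any run of whitespace.
    return value.split()


# Hypothetical lab DNS servers.
print(parse_server_list("192.168.1.10 192.168.1.11"))
```

A comma-separated value such as "192.168.1.10,192.168.1.11" raises a ValueError instead of silently passing through.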

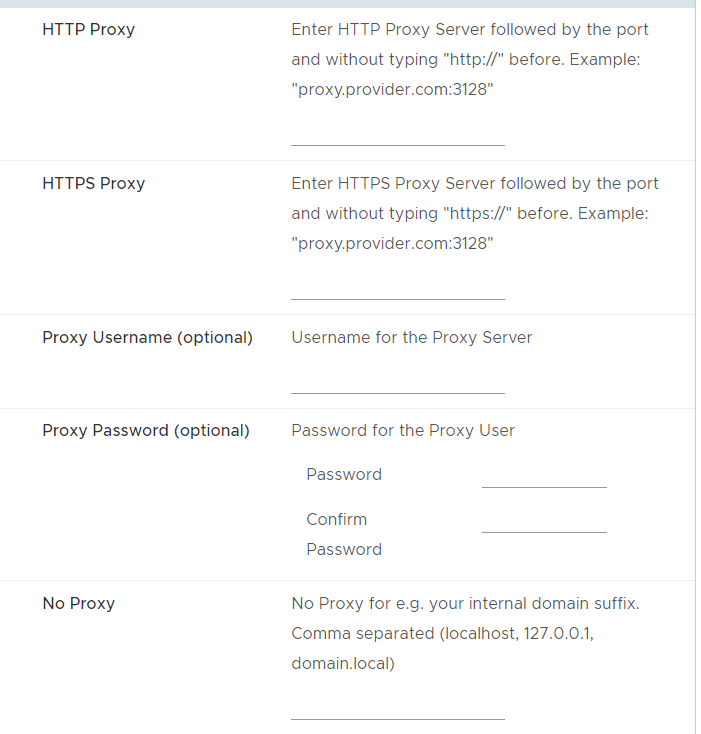

Proxy support was new in 0.2. I don’t have a proxy server in the lab so I am not configuring it here.

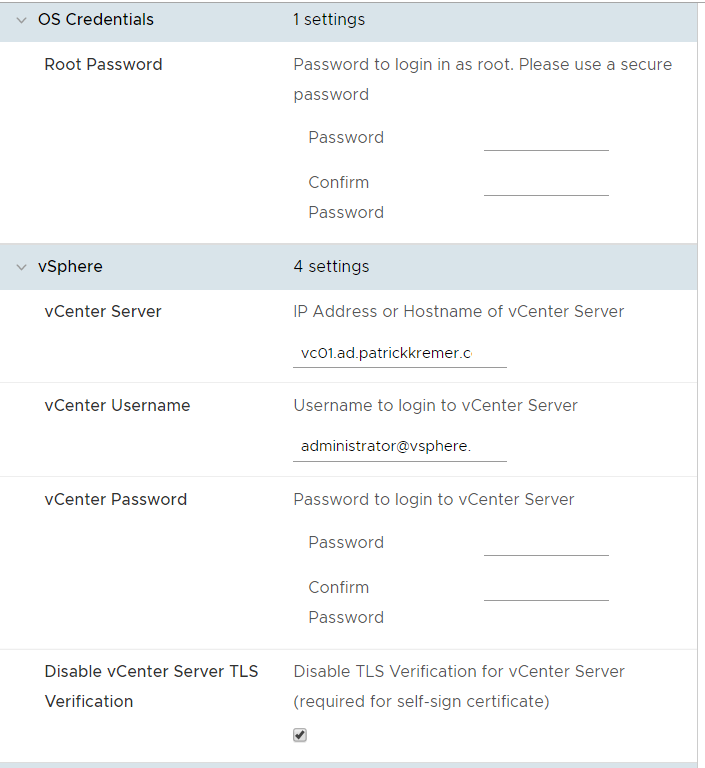

These settings are all straightforward. Definitely disable TLS verification if you’re running in a homelab. One gotcha is passwords. I left the passwords blank for the purposes of this screenshot, and the wizard lets you move ahead with blank passwords. This resulted in a failure after the appliance booted because it couldn’t connect to vCenter. On the plus side, it led to my capture of how to troubleshoot failures, which is shown further down in this post.

PROTIP: If you’re brand new to this process, it’s easiest to get this working with an administrator account, even though best practice is to use a read-only vCenter account. You can easily change this credential after everything is working by following the procedure at the bottom of this post: edit /root/event-router/config.json (in version 0.4 the file moved to /root/config/event-router-config.json) and restart the pod as shown in the directions.

New in 0.3, you can decide whether you want to use AWS EventBridge or OpenFaaS as your event processor. EventBridge setup is covered in Part Ia of this series. For this setup, we choose OpenFaaS.

Since we’re not configuring EventBridge, we leave this section blank.

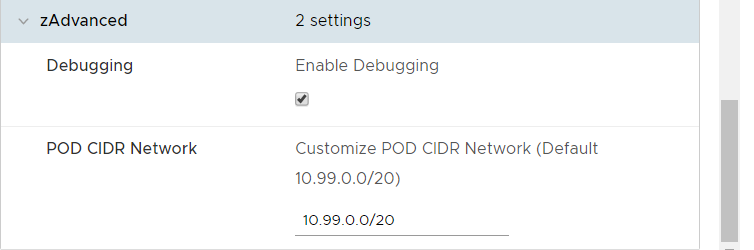

The POD CIDR network is an important setting new in 0.2. This network is how the containers inside the appliance communicate with each other. If the IP that you assign to the appliance is in the POD CIDR subnet, the containers will not come up and you will be unable to use the appliance. You must change either the POD CIDR address or the appliance’s IP address so they do not overlap.
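The overlap check is simple enough to run before you deploy. Below is a minimal sketch using Python's standard `ipaddress` module. The function name and the example addresses are assumptions for illustration; substitute the POD CIDR and appliance IP you actually plan to enter in the OVF wizard.

```python
import ipaddress


def ip_in_pod_cidr(appliance_ip, pod_cidr):
    """Return True if the appliance IP falls inside the POD CIDR network."""
    # strict=False tolerates a CIDR written with host bits set, e.g. 10.10.0.1/16.
    network = ipaddress.ip_network(pod_cidr, strict=False)
    return ipaddress.ip_address(appliance_ip) in network


# Hypothetical values: this appliance IP collides with the pod network.
print(ip_in_pod_cidr("10.10.5.20", "10.10.0.0/16"))    # prints True
# This one does not, so the containers can come up.
print(ip_in_pod_cidr("192.168.1.50", "10.10.0.0/16"))  # prints False
```

If the check returns True, change either the POD CIDR or the appliance IP before deploying.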

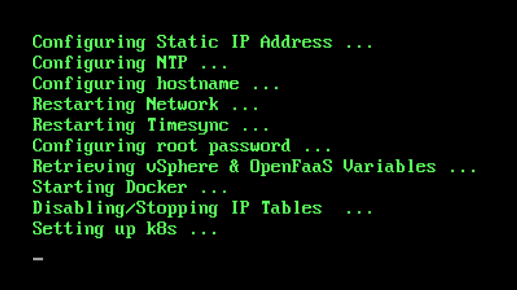

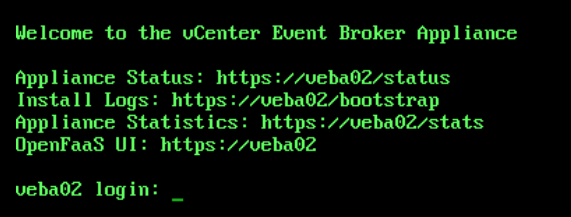

This is what the VEBA looks like during first boot.

If you end up with this, [IP] in brackets instead of your hostname, something has failed. Did you use a subnet mask instead of CIDR? Did you enter comma-separated DNS servers instead of space-separated? Did you put in the incorrect gateway? If you enabled debugging at deploy time, you can look at /var/log/boostrap-debug.log for detailed debug logs to help you pinpoint the error. If not, see what you can find in /var/log/boostrap.log.

There is an entire troubleshooting section for this series. When the pod wasn’t working despite successful deployment, I needed to determine why.

Enabling SSH on the appliance is covered in the troubleshooting section. You could also use the VM console without enabling SSH.

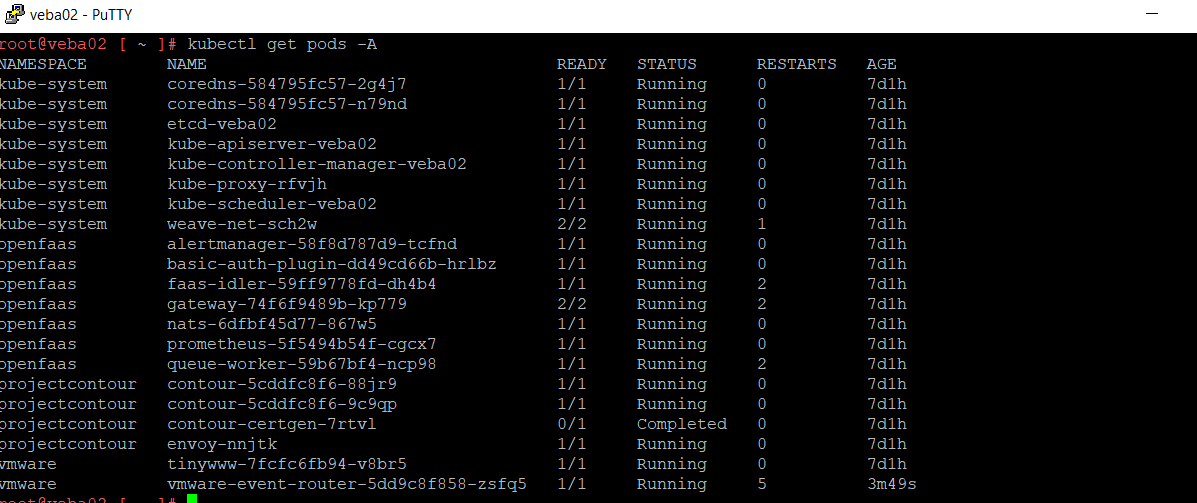

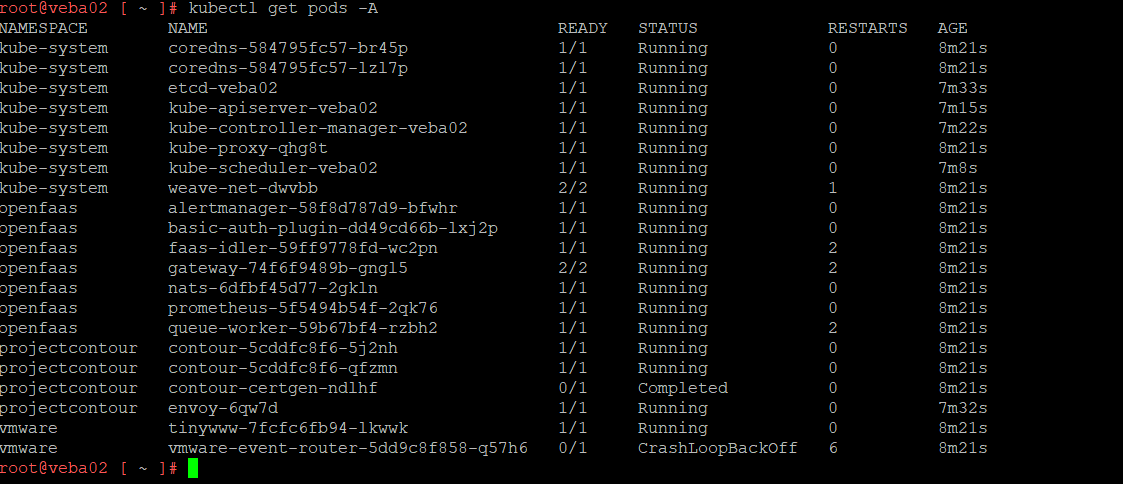

kubectl get pods -A lists out the available pods.

The pod that is crashing is the event router pod.

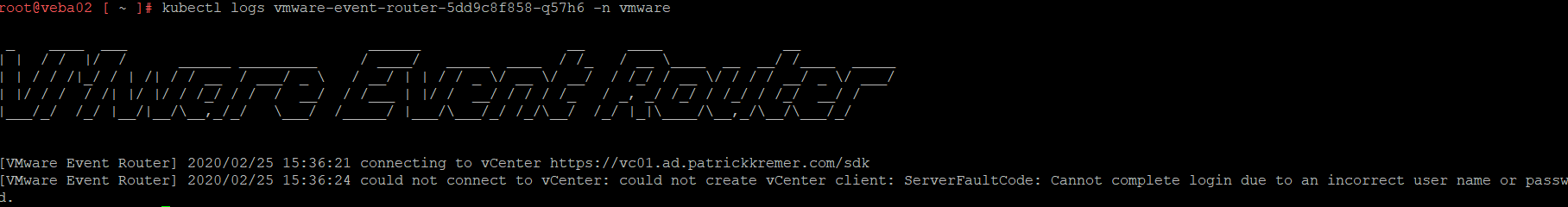

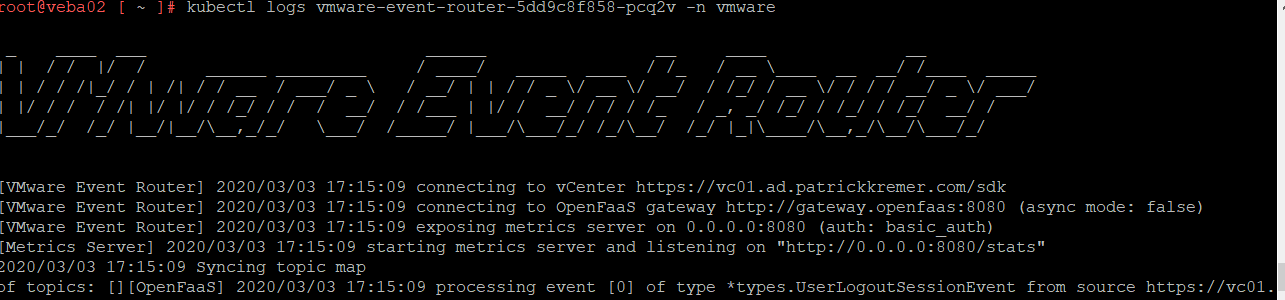

kubectl logs vmware-event-router-5dd9c8f858-q57h6 -n vmware will show us the logs for the event router pod. We can see that the vCenter credentials are incorrect.

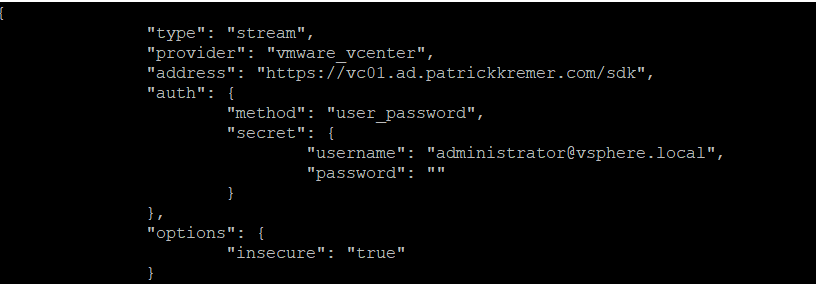

We need to edit the JSON config file for Event Router.

vi /root/config/event-router-config.json

Oops. The password is blank.

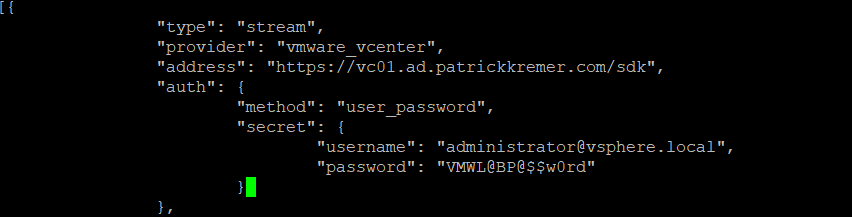

Fix the password

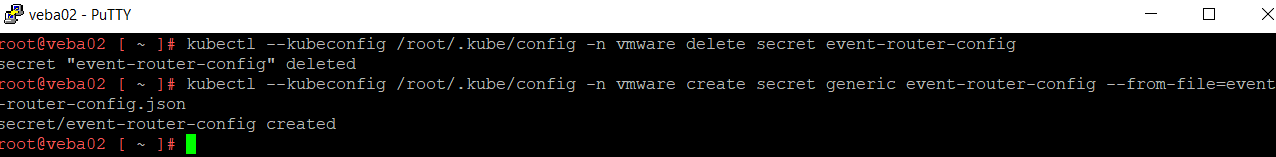
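Before recreating the secret, it can be worth confirming that no credential field is still empty. The sketch below walks any JSON document and reports keys containing "password" whose value is blank. It deliberately makes no assumption about the real layout of event-router-config.json; the sample document in it is invented purely for illustration.

```python
import json


def find_blank_passwords(node, path="$"):
    """Return the paths of all keys containing 'password' with an empty value."""
    hits = []
    if isinstance(node, dict):
        for key, value in node.items():
            child = "%s.%s" % (path, key)
            if "password" in key.lower() and value in ("", None):
                hits.append(child)
            # Recurse so nested sections are checked too.
            hits.extend(find_blank_passwords(value, child))
    elif isinstance(node, list):
        for index, item in enumerate(node):
            hits.extend(find_blank_passwords(item, "%s[%d]" % (path, index)))
    return hits


# Invented sample, NOT the actual event router schema.
sample = json.loads('{"auth": {"username": "veba-ro@vsphere.local", "password": ""}}')
print(find_blank_passwords(sample))  # prints ['$.auth.password']
```

To run it against the real file, load it with json.load() from /root/config/event-router-config.json instead of the sample string.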

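Delete and recreate the pod secret. The create command below references the config file by a relative path, so run it from the directory that contains event-router-config.json.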

kubectl -n vmware delete secret event-router-config

kubectl -n vmware create secret generic event-router-config --from-file=event-router-config.json

Get the current pod name with

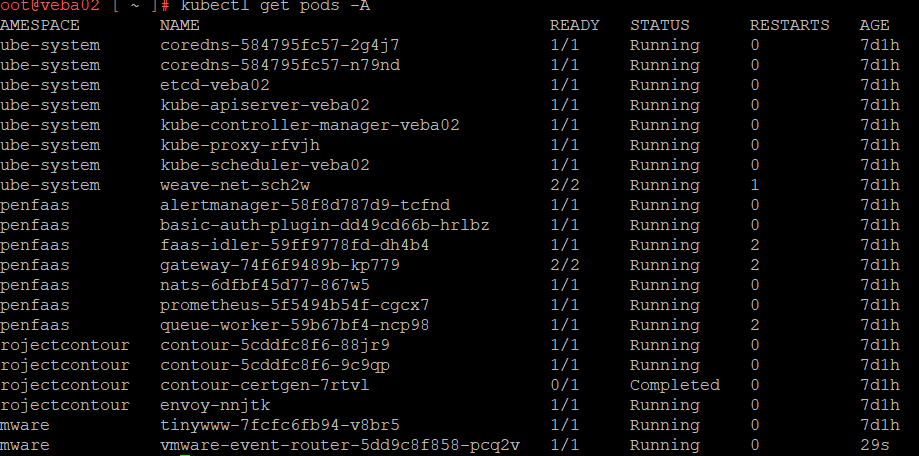

kubectl get pods -A

Delete the event router pod

kubectl -n vmware delete pod vmware-event-router-5dd9c8f858-zsfq5

The event router pod will automatically recreate itself. Get the new name with

kubectl get pods -A

Now check out the logs with

kubectl logs vmware-event-router-5dd9c8f858-pcq2v -n vmware

We see the event router successfully connecting and beginning to receive events from vCenter
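The pod name suffix changes every time the pod is recreated, which is why the steps above look it up again before each delete or logs command. If you find yourself repeating this, the lookup can be scripted. Here is a rough sketch that parses the text output of kubectl get pods -A. The function name is my own, and in the sample output the coredns line and the status columns are made up; only the event router pod name comes from this post.

```python
def find_pod(kubectl_output, prefix="vmware-event-router-"):
    """Return (namespace, pod_name) for the first pod whose name starts with prefix."""
    for line in kubectl_output.splitlines():
        columns = line.split()
        # With -A, column 0 is NAMESPACE and column 1 is NAME.
        if len(columns) >= 2 and columns[1].startswith(prefix):
            return columns[0], columns[1]
    return None


# Illustrative output only.
SAMPLE = """\
NAMESPACE     NAME                                   READY   STATUS    RESTARTS   AGE
kube-system   coredns-584795fc57-4bnpd               1/1     Running   0          10m
vmware        vmware-event-router-5dd9c8f858-pcq2v   1/1     Running   0          2m
"""

print(find_pod(SAMPLE))
```

On the appliance, you could feed it the real output captured with subprocess.run(["kubectl", "get", "pods", "-A"], capture_output=True, text=True).stdout.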

Now we have a VEBA appliance and need to configure it with functions to respond to events. Note that it may take some time for the appliance to respond to HTTP requests, particularly in a small homelab. It took about 4 minutes for it to fully come up after a successful boot in my heavily overloaded homelab. One additional gotcha: the name you input at OVF deployment time is the name encoded in the SSL certificate, so if you input “veba01” as your hostname, you cannot then reach it with https://veba01.fqdn.com.

In part II of this series, I will demonstrate how I configured my laptop with the prereqs to get the first sample function working in VEBA.
